Restart a node in AKS

Occasionally, a single node in your AKS cluster might misbehave. Usually, the self-healing capabilities of Kubernetes should detect that and replace the node. Still, from time to time, you might decide to restart a single node manually.

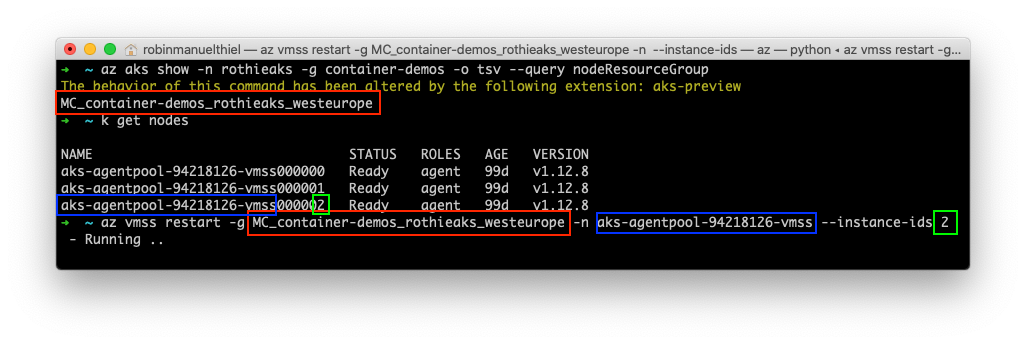

As AKS node pools are organized in Azure Virtual Machine Scale Sets, restarting a node is as easy as restarting a Virtual Machine in a Scale Set.
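The same restart can also be done from the command line. The following is a minimal sketch, assuming the node resource group and Scale Set names used in the examples in this post; the function names `list_instances` and `restart_instance` are hypothetical helpers, so replace the values with your own.

```shell
# Assumed values (taken from the examples in this post); replace with your own.
NODE_RG="MC_container-demos_rothieaks_westeurope"
VMSS="aks-agentpool-94218126-vmss"

# List the instances of the Scale Set to find the instance ID of the node
list_instances() {
  az vmss list-instances -g "$NODE_RG" -n "$VMSS" -o table
}

# Restart a single instance; $1 is the instance ID
restart_instance() {
  az vmss restart -g "$NODE_RG" -n "$VMSS" --instance-ids "$1"
}
```

Calling `list_instances` first shows the available instance IDs; `restart_instance 2` would then restart the instance with ID 2.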

What if a node can't be restarted?

In rare cases, a node gets stuck and can't be restarted easily. You can then try to deallocate the node and start it again with the following commands:

# De-allocate the VM

az vmss deallocate -g MC_container-demos_rothieaks_westeurope -n aks-agentpool-94218126-vmss --instance-ids 2

# Start the deallocated VM again

az vmss start -g MC_container-demos_rothieaks_westeurope -n aks-agentpool-94218126-vmss --instance-ids 2

If that does not solve your problem, the last resort is to delete the VM from the Scale Set. Azure interprets removing a VM from a Scale Set as scaling down the cluster, so it won't add a new VM to the Scale Set after you have removed one.

# Remove VM from node pool scale set

az vmss delete-instances -g MC_container-demos_rothieaks_westeurope -n aks-agentpool-94218126-vmss --instance-ids 2

# Scale the AKS cluster back to its original size

az aks scale -n rothieaks -g container-demos --nodepool-name agentpool -c 3

Auto-restart nodes for updates

If you just want the cluster nodes to restart automatically when they need a reboot after an update, take a look at the Kured (KUbernetes REboot Daemon) project. Kured looks for the /var/run/reboot-required file on each node and restarts the node if this file is present.
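To illustrate the check described above, here is a minimal sketch in shell. It is not Kured's actual implementation; the `needs_reboot` function and its optional path argument are hypothetical and only mirror the sentinel-file test.

```shell
# Hypothetical helper: succeeds if the reboot sentinel file exists.
# $1 optionally overrides the path (default: /var/run/reboot-required).
needs_reboot() {
  sentinel="${1:-/var/run/reboot-required}"
  [ -f "$sentinel" ]
}

# Report the status for the node this script runs on
if needs_reboot; then
  echo "reboot required"
else
  echo "no reboot required"
fi
```

Run on a node, this only reports whether the file is present; it does not reboot anything.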

Installing Kured is as easy as applying its DaemonSet with the following command:

kubectl apply -f https://github.com/weaveworks/kured/releases/download/1.2.0/kured-1.2.0-dockerhub.yaml
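
After applying the manifest, you may want to confirm that the DaemonSet has been rolled out to every node. The following is a minimal sketch, assuming the manifest creates a DaemonSet named kured in the kube-system namespace; check the manifest you applied if the names differ. The function names are hypothetical helpers.

```shell
# Assumption: the DaemonSet is named "kured" and lives in "kube-system"
KURED_NS="kube-system"

# Wait until the Kured pods have been rolled out
kured_rollout() {
  kubectl rollout status daemonset/kured -n "$KURED_NS"
}

# Show desired vs. ready pod counts for the DaemonSet
kured_status() {
  kubectl get daemonset kured -n "$KURED_NS"
}
```

Calling `kured_rollout` blocks until the rollout has finished, and `kured_status` then shows whether the number of ready pods matches the number of nodes.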